Crime-Predicting AI Fares Worse than Humans in Repeat Offender Study, and It's Racist Too

The 21st century has witnessed AI (artificial intelligence) accomplishing tasks like handily defeating humans at chess or teaching them foreign languages quickly.

A more advanced task for the computer would be predicting an offender's likelihood of committing another crime. That's the job of an AI system called COMPAS (Correctional Offender Management Profiling for Alternative Sanctions). But it turns out that the tool is no better than an average bloke, and can be racist too. That's exactly what a research team discovered after extensively studying the AI system, which is widely used by judicial institutions.

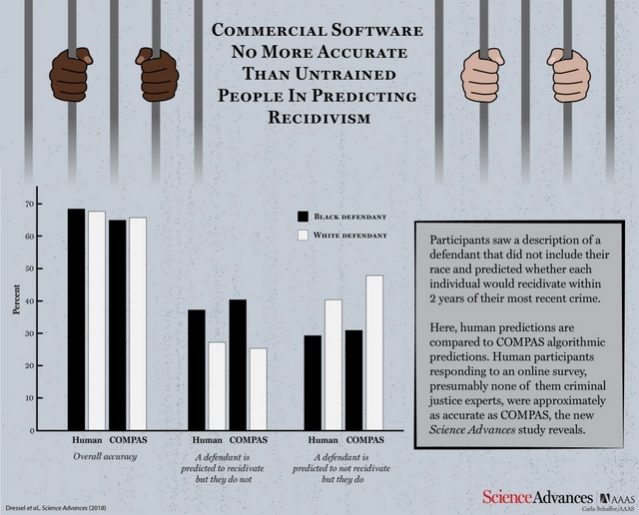

According to a research paper published in Science Advances, COMPAS was pitted against a group of human participants to test how well it fares against the predictions made by an ordinary person. The machine and the human participants were given 1000 test descriptions containing the age, previous crimes, gender, etc. of offenders whose probability of repeat crimes was to be predicted.

With considerably less information than COMPAS (only 7 features compared to COMPAS's 137), a small crowd of nonexperts is as accurate as COMPAS at predicting recidivism.

COMPAS clocked an overall accuracy of 65.4% in predicting recidivism (the tendency of a convicted criminal to re-offend), which is less than the collective prediction accuracy of human participants standing at 67%.

Now just take a moment to reflect on the fact that this AI system, which fares no better than an average person, was used by courts to predict recidivism.

Commercial software that is widely used to predict recidivism is no more accurate or fair than the predictions of people with little to no criminal justice expertise who responded to an online survey.

What's even worse is that the system was found to be just as susceptible to racial prejudice as its human counterparts, who were asked to predict the probability of recidivism from descriptions that also contained racial data about the offenders. You shouldn't be too surprised there, as AI is known to take on the patterns that its human teachers program it to learn.

Although both sides fall drastically short of achieving an acceptable accuracy score, the use of an AI tool that is no better than an average human being raises many questions.

Source: https://beebom.com/ai-worse-humans-repeat-offender-study-racist/

Posted by: blakeneywouturairim.blogspot.com
